Bert finetune

1/4/2023

In this blog, I will go step by step through fine-tuning the BERT model for movie review classification (i.

BERT (Bidirectional Encoder Representations from Transformers) is a state-of-the-art language model pre-training approach that Google released in 2018. Our BERT encoder is the pretrained BERT-base encoder from the masked language modeling task (Devlin et al., 2018). We feed the input sentence or text into a transformer network like BERT.

There are multiple approaches to fine-tune BERT for the target tasks, one of which is further pre-training the base BERT model. We used two different models: one in which the base BERT model is non-trainable (frozen) and another in which it is trainable.

For more information about how to configure experiments, check out the Determined experiment configuration documentation. If you want to modify the experiment, say to change hyperparameters or the duration of training, you can easily make changes to this file.

In this section we will explore the architecture of our extractive summarization model. The BERT summarizer has 2 parts: a BERT encoder and a summarization classifier. To fine-tune our network, we need somehow to tell our network which sentence.
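To make the two-part summarizer concrete, here is a minimal sketch of the classifier half, assuming the encoder has already produced one vector per sentence. The vectors, weights, and function names below are hypothetical stand-ins chosen for illustration; they are not the output of a real BERT encoder or the code behind this post.

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    """Squash a raw score into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def score_sentences(
    sentence_vectors: Sequence[Sequence[float]],
    weights: Sequence[float],
    bias: float,
) -> List[float]:
    """Summarization classifier: one logistic unit applied to each sentence vector."""
    return [
        sigmoid(sum(w * v for w, v in zip(weights, vec)) + bias)
        for vec in sentence_vectors
    ]


def select_summary(sentences: Sequence[str], scores: Sequence[float], k: int) -> List[str]:
    """Keep the k highest-scoring sentences, restored to document order."""
    top = sorted(range(len(sentences)), key=lambda i: scores[i], reverse=True)[:k]
    return [sentences[i] for i in sorted(top)]


sentences = [
    "BERT is pre-trained on unlabeled text.",
    "The weather was nice that day.",
    "Fine-tuning adapts it to a target task.",
]
# Hypothetical 3-dimensional sentence vectors. A real BERT-base encoder
# would produce 768-dimensional vectors instead.
sentence_vectors = [[0.9, 0.1, 0.3], [0.1, 0.8, 0.0], [0.7, 0.2, 0.6]]
# Hypothetical classifier parameters. In practice these are learned during fine-tuning.
weights, bias = [2.0, -1.5, 1.0], -1.0

scores = score_sentences(sentence_vectors, weights, bias)
summary = select_summary(sentences, scores, k=2)
print(summary)
```

With these made-up numbers the first and third sentences score highest, so they form the two-sentence extractive summary. Whether the encoder is frozen or trainable only changes which parameters are updated during training, not this scoring step.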

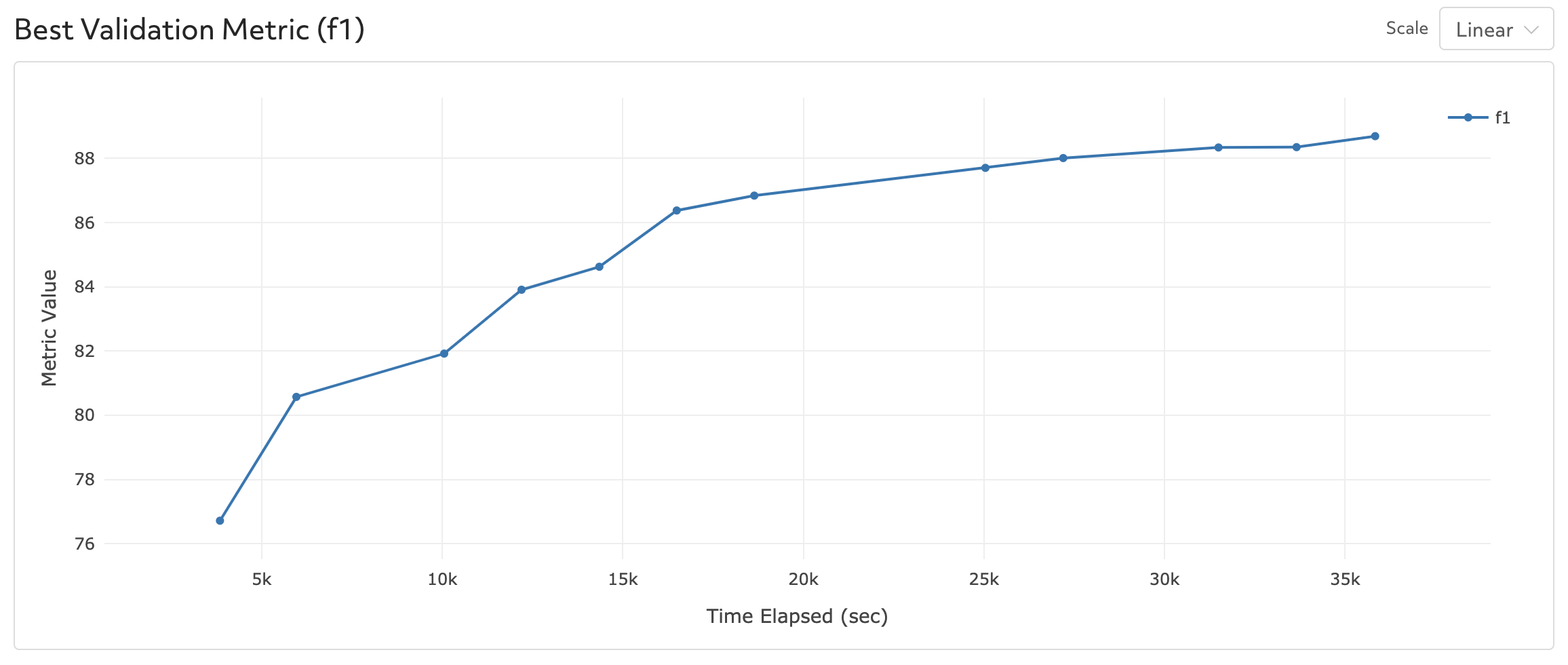

The task is to classify the sentiment of COVID. We will fine-tune BERT on a classification task, and we will see fine-tuning in action in this post. BERT has a huge number of parameters, hence training it from scratch on a small dataset would lead to overfitting.

Bidirectional Encoder Representations from Transformers (BERT) is a state-of-the-art, transformer-based machine learning approach for natural language processing pre-training, created by Google. It can be pre-trained and later fine-tuned for a specific task.

The experiment configuration:

    description: Bert_SQuAD_PyTorch
    hyperparameters:
      global_batch_size: 12
      learning_rate: 3e-5
      lr_scheduler_epoch_freq: 1
      adam_epsilon: 1e-8
      weight_decay: 0
      num_warmup_steps: 0
      max_seq_length: 384
      doc_stride: 128
      max_query_length: 64
      n_best_size: 20
      max_answer_length: 30
      null_score_diff_threshold: 0.0
      max_grad_norm: 1.0
      num_training_steps: 15000
    searcher:
      name: single
      metric: f1
      max_length:
        records: 180000
      smaller_is_better: false
    min_validation_period:
      records: 12000
    data:
      pretrained_model_name: "bert-base-uncased"
      download_data: False
      task: "SQuAD1.1"
    entrypoint: model_def:BertSQuADPyTorch
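Several numbers in the configuration above are related, and a short sketch can make that visible. It rests on two assumptions that the post does not confirm: that one training step consumes one global batch, and that the model definition splits long contexts with the common SQuAD sliding-window convention (three positions reserved for `[CLS]` and two `[SEP]` tokens). The function names are hypothetical.

```python
from typing import List, Tuple

# Values copied from the experiment configuration.
GLOBAL_BATCH_SIZE = 12
MAX_LENGTH_RECORDS = 180_000
MIN_VALIDATION_PERIOD_RECORDS = 12_000
MAX_SEQ_LENGTH = 384
DOC_STRIDE = 128


def records_to_batches(records: int, batch_size: int) -> int:
    """Convert a length given in records into a number of batches (steps)."""
    return records // batch_size


def doc_windows(
    num_doc_tokens: int,
    num_query_tokens: int,
    max_seq_length: int = MAX_SEQ_LENGTH,
    doc_stride: int = DOC_STRIDE,
) -> List[Tuple[int, int]]:
    """Return (start, length) spans that cover a long context with overlap.

    Assumption: 3 positions are reserved for the [CLS] and two [SEP] tokens,
    as in the usual SQuAD preprocessing for BERT.
    """
    max_doc_tokens = max_seq_length - num_query_tokens - 3
    if max_doc_tokens <= 0:
        raise ValueError("query leaves no room for context tokens")
    windows = []
    start = 0
    while True:
        length = min(num_doc_tokens - start, max_doc_tokens)
        windows.append((start, length))
        if start + length >= num_doc_tokens:
            break  # the last window reaches the end of the context
        start += min(length, doc_stride)
    return windows


total_steps = records_to_batches(MAX_LENGTH_RECORDS, GLOBAL_BATCH_SIZE)
validation_every = records_to_batches(MIN_VALIDATION_PERIOD_RECORDS, GLOBAL_BATCH_SIZE)
print(total_steps, validation_every)  # 15000 1000

# A 500-token context with a 20-token question needs three overlapping windows.
print(doc_windows(num_doc_tokens=500, num_query_tokens=20))
# [(0, 361), (128, 361), (256, 244)]
```

Under the first assumption, 180000 records at a batch size of 12 gives 15000 steps, which matches `num_training_steps`, and validation runs every 1000 steps. If you change `global_batch_size` or `max_length`, it is probably worth keeping `num_training_steps` consistent with them.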
